-

Posts

28,257 -

Joined

-

Last visited

-

Days Won

402


Everything posted by robcat2075

-

It has a thread with instructions and samples stickied for it... https://www.hash.com/forums/index.php?showtopic=33795 I've never installed the 2008 rig so I'm not sure what is involved, but I don't recall anyone saying the process didn't work anymore. I suggest trying it out on a simple test case, which is what one might do anyway to learn a new rig.

-

I always save old installers, with a version number, just in case there's a reason to roll back.

-

Great looking character head!

-

How did you get to that screen if A:M isn't running? Are you installing v19d to some directory other than the one v19c was in? Normally they overwrite to the same directory. Is this the first v19 you've installed and run? Then you can copy the master0.lic file from your v18 directory to your v19 directory.

If you still need an activation code and don't have the email that contained it, you can log into your account at Hash.com/store, then go to My Account at the top, and "view" your most recent purchase of the A:M sub. The "Order Information" will show your activation code.

-

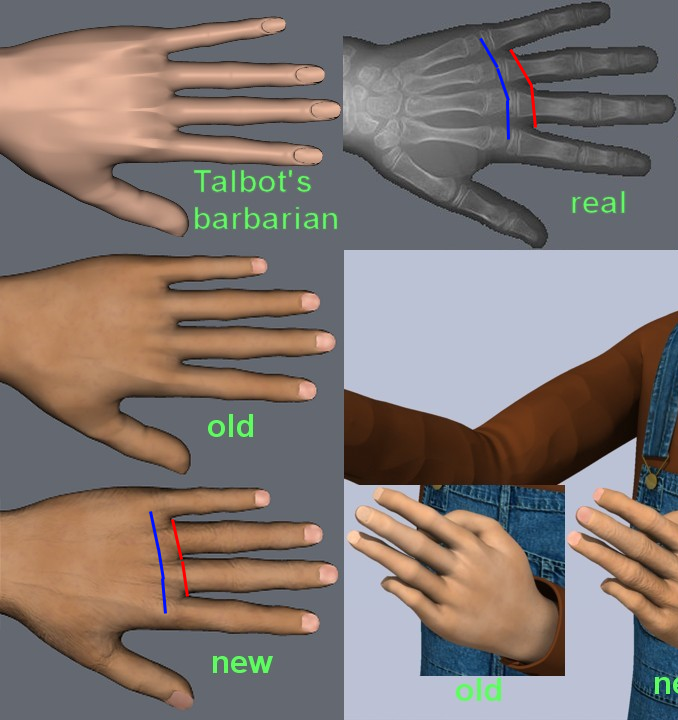

Yes. It's a subtle change but I think it makes for a more organic-looking hand. Small detail... notice the difference in the shape of the thumb webbing. It occurs to me that I have misidentified the axis of rotation with my blue line. If the first phalanx slides on the stump of the metacarpal then the apparent axis is probably inside the stump rather than in the gap between the two bones.

-

If you think you had it bad... The Four Yorkshiremen

-

Hands certainly have come a long way since barbarian days. I still think you could go bigger on the arc of the knuckles and either move them farther into the hand or move the webbing farther out.

-

Can you post a sample PRJ with that set up?

-

You could paste that result into the alpha channel that I discuss above. This would save having to number the patches to identify them.

-

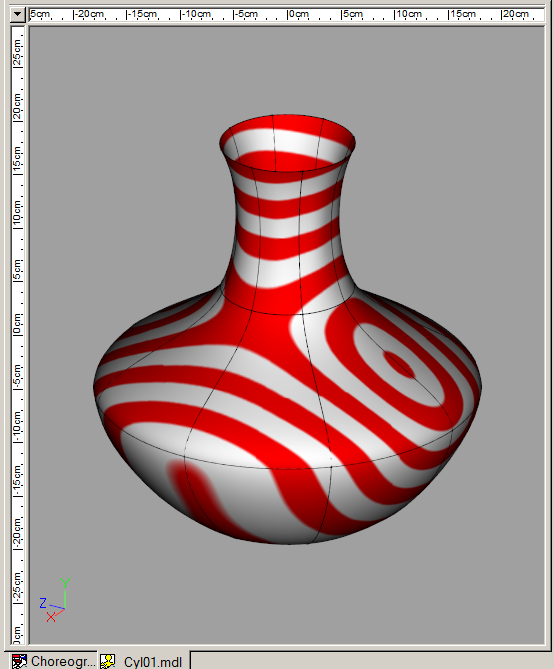

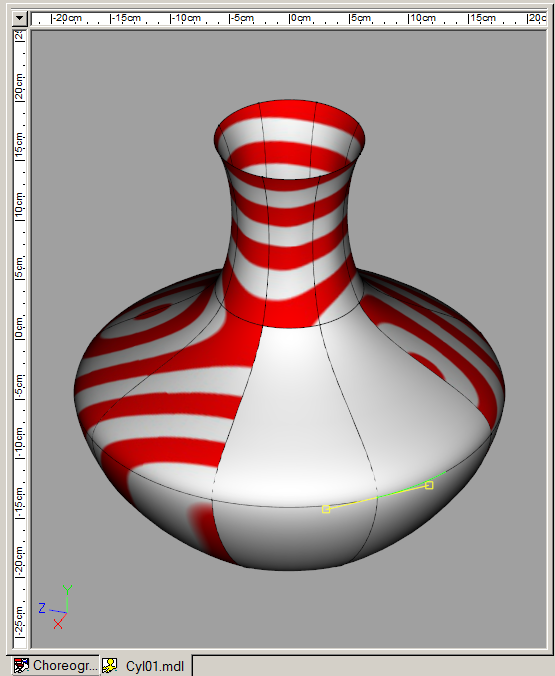

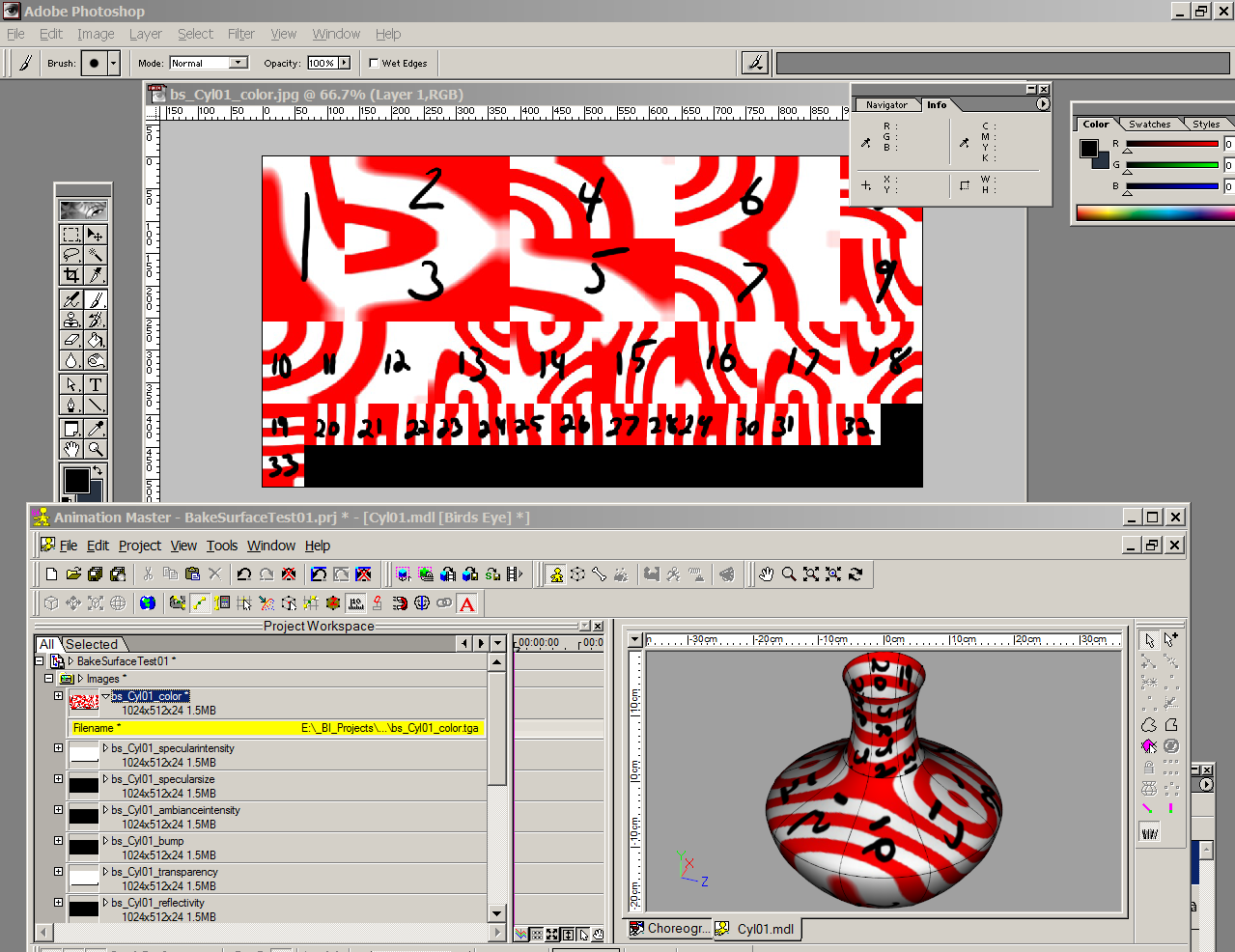

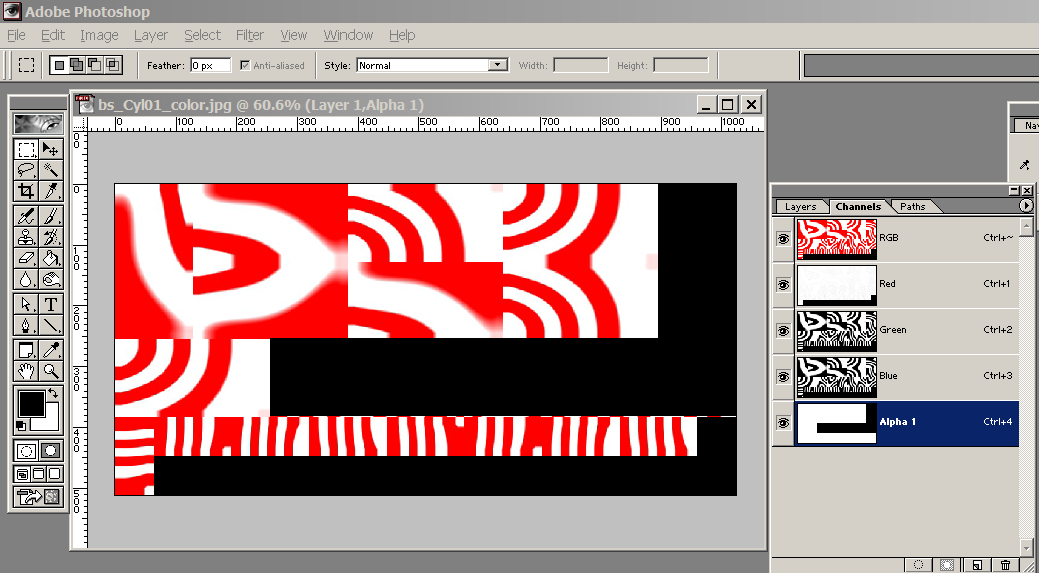

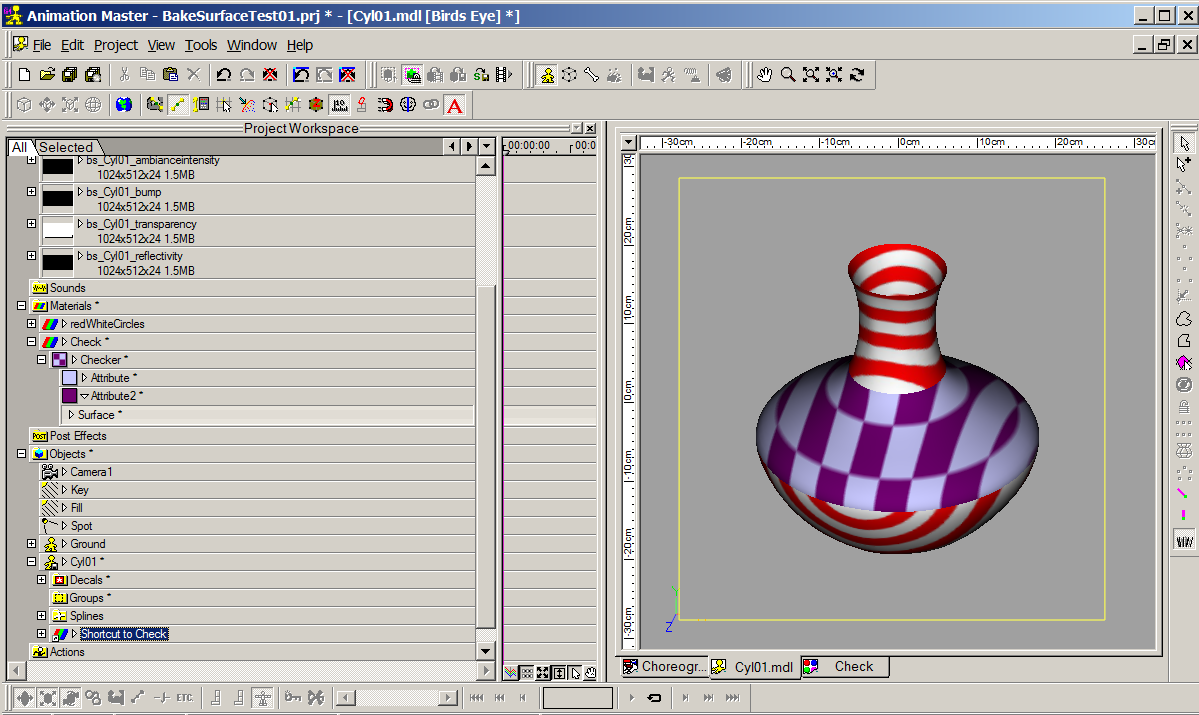

I don't think there is a way to just bake part of a model. Here are some work-around ideas...

- If the selection is a self-contained portion of the model, not connected to any other part... cut and paste it into another model window. Bake it there, then copy and paste it back into the original model.
- If you don't mind having some wasted bitmap space... bake the whole model like this... and then remove the baked images from parts of the model by detaching and reattaching CPs that are adjacent to patches you don't want baked. Detaching and reattaching one CP cleared four patches...
- An alternative to the above... it might be possible to remove the decal assignments to certain patches by text editing the MDL file rather than manually disabling the patches. I have not investigated that.
- Crazy idea... the surface maps are all baked as 24-bit maps. In a paint program you could add an alpha channel to those and paint black on that anywhere you don't want the baked surface to show.

If you hold down the SHIFT key when you choose "Bake Surface" you can set "Margins" to some large value like 10 so you don't have to be pixel accurate in the areas where wanted and unwanted patches adjoin.

I baked the surface, then in PS I temporarily wrote a number on each tile and swapped that new version with the original in A:M so I could see how patches fit to the model...

After looking at that I figured that if I wanted to remove the baking from the middle patches, I needed to hide 8, 9, and 13-18. I painted black on the alpha channel under those tiles...

... then saved and swapped again. Here is the model with the edited baked surface on it. I added a new Checker Material on the whole model. It shows that the baked surface is no longer visible on the middle patches.

-

Hmmm... I presume hiding the unwanted portion does not get you that, right?

-

Are you installing to the default directory?

-

They're definitely pushing it on that "Beneficial TOOT TOOT" sound mark. Several of those are so brief I have to wonder if they would hold up to a legal challenge.

-

I had not noticed the "Use for new cameras" setting!

-

An app that searched an A:M Chor for any and all keyframes could make a list of their times, eliminate the duplicates, and present that list to you, to be pasted into the custom frame range. That would be possible with the "string" tools that most languages have.
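Here is a rough sketch of that idea in Python, just to show how little string handling it would take. Big caveat: I have not checked how a Chor actually stores its keyframes, so the `KEY_LINE` pattern and the `SAMPLE` text are made up for illustration, as are the function names. The 24 fps default and the comma-separated output are also assumptions; adjust them to whatever the custom frame range actually accepts.

```python
import re

# HYPOTHETICAL pattern: assumes each keyframe sits on its own line as
# "<time-in-seconds> <value>". The real Chor layout may be different.
KEY_LINE = re.compile(r"^\s*(\d+(?:\.\d+)?)\s+\S+")

# Invented sample text, NOT real Chor contents.
SAMPLE = """\
0 1.5
0.5 2.0
0.5 7.25
1.25 3.0
"""


def keyframe_frames(chor_text, fps=24, pattern=KEY_LINE):
    """Return the sorted, de-duplicated frame numbers found in chor_text."""
    frames = set()  # a set drops duplicate times automatically
    for line in chor_text.splitlines():
        match = pattern.match(line)
        if match:
            seconds = float(match.group(1))
            frames.add(round(seconds * fps))
    return sorted(frames)


def as_frame_list(frames):
    """Join frame numbers into one comma-separated string for pasting."""
    return ",".join(str(f) for f in frames)


if __name__ == "__main__":
    print(as_frame_list(keyframe_frames(SAMPLE)))
```

The only part that would need real work is replacing the pattern with one that matches how keyframe times are really written in the file.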

-

At the blocking stage you don't do final renders, and the difference in time saved by excluding repeated frames from a shaded render will be negligible. If you did want final renders at the blocking stage you could give A:M a custom list of frame numbers to render in the frame range parameter.

-

Consider this... what is the prediction doing that makes it faster than the render? It must be leaving something out. That thing left out is what makes the prediction not 100% accurate. In a render with half a million pixels, even a 1% error rate (about 5,000 wrongly rendered pixels) would be noticeable and not usable.

-

I can answer one of these... No, A:M does not.

The only test that could ascertain if a pixel in frame 2 is exactly the same as in frame 1 is to fully render it and compare. A faster shortcut test that worked would need to determine every aspect of that pixel to be of any use as a test for this purpose. If it did that, it would also have all the information needed to correctly write that pixel to the image file... IOW it would be rendering the pixel. If there were a faster way of rendering the pixel, that would be used as the renderer.

Also, just sampling some pixels in advance won't cover 100% of the cases where pixels could change from frame to frame. Less than 100% would lead to omissions of things that should have been rendered but were presumed to not need to be rendered.

If there were some accurate test that wasn't rendering... it would still have to be not just faster per pixel but WAY faster than regular rendering to justify spending CPU time on it that could have been spent on rendering.

This is a paraphrase of something Martin said when someone asked why the prediction for how long a frame would take to render wasn't more accurate at the start of the render. Basically, the only way to accurately know how long all the pixels in a frame will take to render is to render them.

-

Have a good trip, Jason!

-

He looks real good, Rodger! Suggestion... make the first and third fingers shorter than the middle finger to avoid the rake look. The apparent difference in length actually begins at the knuckle line in the hand which is curved and angled rather than straight across the hand.

-

That looks like fun!

-

Kevin, this zip has 8 progressively different OBJs in it. TestCyls.zip Load each one into Lumion and tell me which is the first one that comes in with colors and Group names.

-

"Hi Jalih! Tell us more about this step. What did you have to do?"

"Nothing fancy, just deleted the imported color map that looked wrong and loaded the original png file from AM project. Then it was just a matter of scaling, positioning the texture and changing the color of the face material to be same as the rest of the head."

I think the problem for Kevin is that Lumion has no model editing capability. It's just a renderer. Everything has to work from imports.

-

Hi Jalih! Tell us more about this step. What did you have to do?

-

We need to Skype about this. There is too much getting lost on the forum here.