-

Posts

907 -

Joined

-

Last visited

Previous Fields

-

Interests

Way too many for one lifetime: Animation (especially the animation part!), drawing, painting, sculpture (especially kinetic--moving--sculptures), multichannel audio recording, archaeology, physics (especially the extremes--QM, Relativity), good beers/ales, mountain climbing, hiking, stone tool-making, nature photography, metal working, woodworking, microscopy, ...

-

A:M version

v19

-

Hardware Platform

Win 10

-

System Description

Microsoft Surface Pro 4 - Intel i7 core. Will likely add a new server for more serious work later. Note: A:M version 19.0 (not on profile drop-down)

Profile Information

-

Name

Bill Gaylord

-

Location

Stone Mtn, Georgia


-

Probably separate renders of six cube-face views would be the most straightforward, one camera for each. I could probably develop (or find) a utility to map the renders to an equirectangular file format to allow it to be played on a 3D player. Is there an anamorphic lens option on the camera? It will be interesting to work out how to match the borders seamlessly, if possible. I suppose post-processing in a compositing app can be used to correct mismatches in brightness, color, etc., to make the borders seamless. In the meantime I can do a normal static camera view and add the 3D audio track rendered as a binaural (two-channel) track as an initial demo. I'll likely post the audio on its own within the next few weeks. I've found a particular humpback whale recording with a distinct echo off the floor of the ocean. I can separate the whale song from its echo. I'll render the whale calls in specific locations (and at varying distances) around the listener and render the echo as bouncing from below and over a wider expanse of directions--should send chills up your spine if it renders well! Stay tuned!
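For what it's worth, the core of such a mapping utility is a per-pixel lookup: for each pixel of the equirectangular frame, work out which of the six renders it comes from and where on that render. Here is a minimal sketch in Python. The function names are made up for illustration, and the conventions are assumptions (longitude 0 at the image center looking down +z, +y up, +x to the right, each face camera with a 90 degree field of view). Nothing here is an A:M feature, and a real tool would also need to resample and blend pixels.

```python
import math

def equirect_pixel_to_direction(x, y, width, height):
    """Unit view direction for the center of equirectangular pixel (x, y).

    Assumed convention: longitude runs -180..180 degrees left to right,
    latitude runs +90..-90 degrees top to bottom.
    """
    lon = ((x + 0.5) / width) * 2.0 * math.pi - math.pi
    lat = math.pi / 2.0 - ((y + 0.5) / height) * math.pi
    return (math.cos(lat) * math.sin(lon),
            math.sin(lat),
            math.cos(lat) * math.cos(lon))

def direction_to_cube_face(dx, dy, dz):
    """Pick the cube face a direction hits, plus (u, v) in 0..1 on that face.

    u runs left to right and v runs top to bottom, as seen by a camera
    looking outward through that face (assumed orientation).
    """
    ax, ay, az = abs(dx), abs(dy), abs(dz)
    if ax >= ay and ax >= az:
        face = "right" if dx > 0 else "left"
        a = (-dz / ax) if dx > 0 else (dz / ax)
        b = dy / ax
    elif ay >= ax and ay >= az:
        face = "up" if dy > 0 else "down"
        a = dx / ay
        b = (-dz / ay) if dy > 0 else (dz / ay)
    else:
        face = "front" if dz > 0 else "back"
        a = (dx / az) if dz > 0 else (-dx / az)
        b = dy / az
    # a and b are in -1..1 across the face; convert to image-style 0..1.
    return face, (a + 1.0) / 2.0, (1.0 - b) / 2.0

def equirect_pixel_to_cube_face(x, y, width, height):
    """Which face render, and where on it, feeds equirectangular pixel (x, y)."""
    return direction_to_cube_face(*equirect_pixel_to_direction(x, y, width, height))
```

Looping this over every output pixel and copying the color from the matching face render would give a crude converter. Mismatches at the face borders would still need the compositing cleanup mentioned above.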

-

Equiangular should be equirectangular in my post. Two formats are the most popular in VR: equirectangular (the most widely supported), which maps a spherical view (360 degrees horizontal by 180 degrees vertical) to a rectangle and is very easy to store as a normal rectangular picture frame; and cubic, which matches six camera views directly and is not quite as straightforward to store as a file, though not terribly difficult. The fisheye and dual-fisheye formats are not so popular. In VR there are also plenty of proprietary mappings. I figure a render straight to one of these formats could potentially be more efficient...one pass, albeit with more rays, versus rendering multiple views separately, then reformatting the resulting images to construct the VR version. However, rendering multiple views and transforming them outside of A:M is still clearly an option.

-

There may already be such a rendering option, but it would be really cool to have an equiangular or fish-eye, or dual-fish-eye "360 degree" rendering option. Has there been any interest in adding this in A:M? I'm working on binaural 3D audio rendering of whale songs. What is binaural 3D? If you listen to a normal stereo recording with headphones, it will sound like the sound is inside your head. Binaural rendering (if you synthesize the mix) or binaural dummy head recording (live recording with an anatomically realistic dummy head) pops the sound out into space outside your head where it belongs, even though you are listening with headphones. The sophistication with which such recordings can be rendered has improved quite a bit in recent years. Back to whale songs...I can place the whale sounds anywhere in (virtual) space, so you will hear the songs not just around you in the horizontal plane but above...AND below you. With headphones (or virtual reality headgear) that track your head movement, this can also be adapted for virtual reality so you can turn or tilt your head without the sound field turning with your head. It would be so cool to be able to animate whales and render for VR to match the whale songs. Rendering to an equiangular or fisheye video format would allow this to be "prerendered" in a relatively straightforward manner. Playback would be "interactive", but only in the sense of the user being able to control what direction they look. (Same applies to the way I'll render the audio.) Bill Gaylord
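To illustrate the head-tracking part: keeping the sound field fixed while the head turns amounts to counter-rotating each source by the head rotation before it is rendered binaurally. A minimal sketch in Python, handling yaw (left/right turning) only. The function name and the angle convention (degrees, positive to the right) are assumptions for illustration, and the actual binaural rendering step is not shown.

```python
def relative_azimuth(source_azimuth_deg, head_yaw_deg):
    """Azimuth of a world-fixed source as heard by a listener whose head is turned.

    Both angles are in degrees, measured the same way around the listener.
    The result is wrapped into the range -180 (inclusive) to 180 (exclusive).
    """
    difference = source_azimuth_deg - head_yaw_deg
    return (difference + 180.0) % 360.0 - 180.0
```

For example, a whale placed 30 degrees to the right ends up straight ahead (0 degrees) once the listener turns their head 30 degrees to the right, which is what keeps the whale fixed in the virtual space.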

-

When I first tried my Wacom stylus pad with A:M on my Windows 10 Surface Pro 4, I got some pretty wacky, annoying behavior (not caused by A:M, BTW). The following tricks cleared up these problems.

How to get nice performance from a Wacom stylus pad on Windows 10:

A few things to disable:

In the search window on the Windows Settings page, type "flicks" and select "turn flicks on and off". This opens the "Pen and Touch" configuration window. (For some reason typing "Pen and Touch" only provides you "pen and touch information", which is not the window you want.)

On the "Flicks" tab, uncheck "Use flicks to perform common actions quickly and easily"--hope you don't really prefer these gestures to good Wacom tablet performance.

On the "Pen Options" tab, highlight "Press and hold", and click "Settings". In the "Press and Hold Settings" window, uncheck "Enable press and hold for right-clicking" at the top of the window.

Wacom Tablet settings:

Now we need to change a setting in the Wacom Tablet Properties. Open the Wacom Tablet Properties window. On the "Mapping" tab, uncheck "Use Windows Ink". You can set the particular pen configuration to your liking. I set the pen touch to "Click", the rocker switch end closest to the tip to "Right Click", and the other end to "Middle Click" (for apps that use a middle button).

Note: Pressure sensitivity will need to be enabled and tweaked in the particular application that might use it. It is not used by A:M as far as I know.

...and for Adobe Photoshop in particular, in case you use it in support of A:M for textures, etc.:

Note: If you use a reasonably current version of Adobe Photoshop (and perhaps Illustrator) you will also have to create a text configuration file named "PSUserConfig.txt" and place it in the "Adobe Photoshop CC 2015 Settings" folder under "/AppData/Roaming/" (preceded by your username folder path, and where "CC 2015" may be replaced by the designation for your version--essentially find the "Settings" folder).

The content of the file should be:

# Use WinTab
UseSystemStylus 0

Note, that is a zero on the second line. The first line is just a comment (optional).

Hope this is helpful! This made my Wacom stylus tablet a dream to work with in Animation:Master on my Surface Pro 4. I suppose someone can pin this, if it proves sufficiently helpful.
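If you would rather not create the file by hand, a few lines of Python can write it. This is a minimal sketch: the function name is made up for illustration, and you have to pass in the path of your own Photoshop "Settings" folder, since the script deliberately does not guess where it is.

```python
from pathlib import Path

# The two lines described above: an optional comment, then the setting (a zero).
CONFIG_LINES = ["# Use WinTab", "UseSystemStylus 0"]

def write_ps_user_config(settings_dir):
    """Write PSUserConfig.txt into the given Photoshop settings folder.

    settings_dir must be an existing folder; it is supplied by the caller
    and is not looked up automatically.
    """
    folder = Path(settings_dir)
    if not folder.is_dir():
        raise FileNotFoundError(f"Settings folder not found: {folder}")
    target = folder / "PSUserConfig.txt"
    target.write_text("\n".join(CONFIG_LINES) + "\n", encoding="utf-8")
    return target
```

Call write_ps_user_config with the full path to your version's "Settings" folder, then restart Photoshop so it picks up the file.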

-

Thanks! These are all very helpful. What I did in the meantime was add spline rings using the extrude tool. Then I scaled the top surface boundaries to create a bevel...scaling, plus translation to align the contours. Then I just tweaked the control point bias handles for splines perpendicular to the main contours to flatten the surfaces. Probably not the most efficient, but it works nicely when you want to preserve the curvature of the corners of holes and outside corners, and it produces very precisely flat/straight sides. I was aiming for nice slender (and rounded) bevels, which this produced nicely. Yves Poissant had a nice tutorial on bevels on his website (no longer available)...BTW, it looks like his last post was some time ago--any news on Yves lately?

-

Quite cool, indeed! Very clever! I kind of miss some of the contests that used to go on. Or just the exercises like the "Pass the Ball". What about one in honor of Rube Goldberg, where each participant adds a new part to the overall mechanism? State a resulting action, like "flip the burger on the grill" or "deposit our dear departed Auntie Agatha's coffin into the grave---gracefully!" and start it with a simple mechanism and each participant adds a new mechanism that responds to the previous one. Each mechanism should be easy to add to a choreography as a working model that uses any A:M feature other than direct frame by frame manipulation: Use relationships, constraints, dynamics, etc., in the cleverest way you can work out so the control is very simple.

-

Chattanooga, Knoxville, Nashville, Atlanta users?

williamgaylord replied to zandoriastudios's topic in A:M Users Groups

It has been quite a while. Last I saw of anybody from the users group that Colin Freeman used to host in Atlanta was a couple of years ago at Dragon Con. Haven't even seen the Hash crew there for at least a couple of years. I'd be interested. My wife is hoping for an excuse to spend a couple of days in Chattanooga.

-

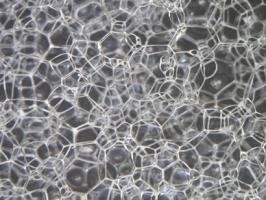

I found a little Java applet demo that is a 2D version of what I want to do in 3D animation. What I would do would be more like a ripple that would look like a knot traced out by a constriction of bubbles or an expansion of bubbles in the 3D bubble foam. This knot pattern would keep its shape and would propagate in a straight path as a knot shaped ripple through the bubbles. Quantum gravity foam...

-

One idea I have is much like George Pal's "Puppetoon" type replacement part animation. I would build the "bubble" animation steps as sort of slices of bubbles that fit together. Most of the replaceable parts would be the same part replicated. The section with the "particle", and the transitional steps nearby, would replace the other "undisturbed" segments, in discrete steps, so it would simulate the propagation of the "particle" through the bubble foam. This way I can build a relatively small number of building blocks that would look random enough, but would be discrete blocks I can re-use easily. Any comments? Any better suggestions? I'm all for saving as much work as possible to get the end result. I think it will work if I build the matching faces of each block, then add bubbles in between to build a complete block. Then I just replicate these and fit them together. Then I would build a series of replacement blocks to simulate the propagating particle.

-

Tell me more about this idea. Can you perhaps do a demo? Would not have to be a whole "myriad"...

-

OOOO! Yes! And that gives me an idea of how to do propagating knots! Cool!

-

I will probably make the "network" the star of the show, though the foam makes for a beautiful image. Any suggestions on how to make tubes glow like neon lights?

-

I'm working on an illustration of spin networks in Loop Quantum Gravity, a theory of quantum gravity that seems to be making substantially better progress than String Theory lately. (It is still in the realm of speculation so far, though, just as String Theory is...) The basic idea is to quantize space-time. The result is very much like a "foam" in certain respects (as John Archibald Wheeler first imagined it might be). So...I'm working on a visual model of the foam to help illustrate the basic ideas and principles developed so far. The quantized space is essentially like grains of space packed together like bubbles in a foam. It turns out the two most important quantized aspects are the volume of each "grain" and the area of each "facet" shared between two adjacent grains. This is the "low energy" model corresponding to the microscopic (quantum) character of space that leads to the macroscopic character of space-time we experience. (The "high energy" states, before and barely after the "Big Bang", are really--I mean REALLY--bizarre in that every grain becomes adjacent to every other grain, which means the whole idea of "space" breaks down, and only a very abstract "relational" concept survives.) So I will be building a model something like the foam shown in the photo, with little spheres inside each "bubble" to represent each "node", and thin tubes interconnecting the nodes through each common bubble "facet". One thing I plan to do, by lighting sets of these links, is to illustrate how the knots and braids formed by connecting chains of these links lead to patterns that correspond to fundamental particles (photons, electrons, etc.). These continuous strings of links are the "loops" of Loop Quantum Gravity. So...I need to figure out how to make the links glow and how to turn each one off or on in the animation.
"Particles" (knots or braids) of "on" links can propagate as links change state--much like individual LED lights in an LED display can make a pattern scroll across the screen as the individual LED lights turn on or off. A bit ambitious, but I think well within the wonderful capability of A:M!

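The LED-style scrolling idea can be sketched in a few lines of Python. This is only an illustration of the on/off logic for a single chain of links, not an A:M script: the function name is made up, and I'm assuming the pattern moves one link per frame along a straight chain.

```python
def link_states(pattern, chain_length, frame):
    """On/off state of every link in a chain at a given frame.

    pattern is a list of booleans describing the "particle" (True = lit).
    The pattern starts at link 0 on frame 0 and moves one link per frame.
    """
    states = [False] * chain_length
    for offset, lit in enumerate(pattern):
        index = frame + offset
        if lit and 0 <= index < chain_length:
            states[index] = True
    return states
```

Evaluating link_states for each frame gives the list of links that should glow at that moment. Those on/off values could then be keyed onto whatever property makes each tube glow.
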
-

Troll, faerie fly-fishing...

williamgaylord replied to zandoriastudios's topic in Work In Progress / Sweatbox

What about a woven cage something like in the photo? If the fairies are bound somehow before being chucked into the cage, then escape would be less of a problem and a simple lid or opening would make sense.

-

Brilliant! You're a genius! I bet you could use this idea to get a "balloon" tire to bulge at the bottom, too! Can you sum two such effects--one for the tread and one for the bulge?