-

Posts

54 -

Joined

-

Last visited

Contact Methods

-

ICQ

0

-

Yahoo

darrin72s

Previous Fields

-

Interests

Android, VB6 DirectX8.1, Civil Engineering

-

A:M version

v17

-

Hardware Platform

Windows

-

System Description

Gateway Laptop w/ Windows 8

-

Short Term Goals

Make a living. Promote website for interests of mutual interest such as fun and learning and seeing interesting things develop.

-

Mid Term Goals

Get a stable location to live at.

-

Long Term Goals

Help with re-development and maintain a website that turns into a viable company that others enjoy seeing successful.

-

Self Assessment: Animation Skill

Knowledgeable

-

Self Assessment: Modeling Skill

Knowledgeable

-

Self Assessment: Rigging Skill

Knowledgeable

Profile Information

-

Name

Darrin

-

Location

Ohio

Recent Profile Visitors

539 profile views

DZ4's Achievements

Apprentice (3/10)

0

Reputation

-

Community Project for Apps/Games?

DZ4 replied to pixelplucker's topic in Work In Progress / Sweatbox

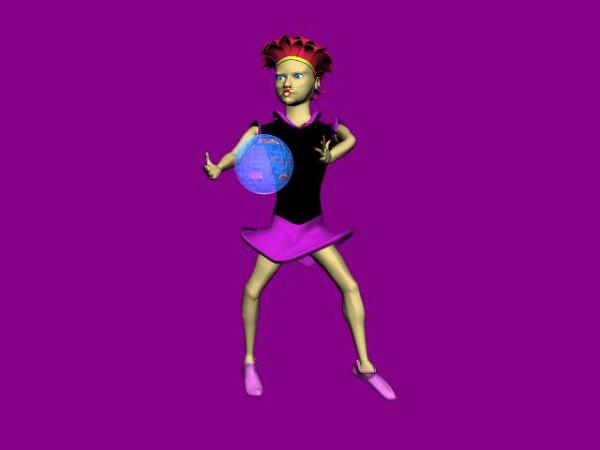

This was a bit rushed, but shows a rough idea of what would show up on a smartphone/tablet, I suppose. Obviously the muscle motion in the face would be time consuming, but I'm not even trying to do that for starters. Actually trying to eliminate the need for muscle motion altogether (...for starters). But she's got a new do, and about to be cloned! Like The Bangles! 4 chicks. Destiny's Child? 3 chicks. Pussycat Dolls? I don't know. Just change the faces and décor, but keep the bone structure so they can dance in sync. That's gotta be huge. -

Community Project for Apps/Games?

DZ4 replied to pixelplucker's topic in Work In Progress / Sweatbox

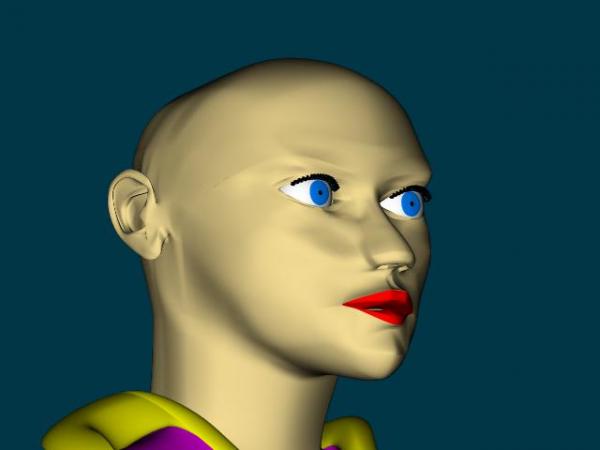

I'll post this here because my avatar still shows up here on the forum. It could go in the "head tutorial" though. Anyway, I got the ears attached to Vera's head and it's looking pretty good, I think. But just before I copied the left ear that I was modeling to the right, I thought... "Don't mess this up, save a copy in this state before it all turns to trash" or "This would be a really neat addition to the head tutorial since what you put there before didn't have any ears" and "Don't share, you worked hard on that, keep it to yourself". But Vera's getting a whole new makeover to go with her new ears. The attached model is in a state where the ear(s) could still be changed, but you can see by the pictures it's easy to copy the right ear and attach to the left side of the head. The model should do most of the talking. I'm using FL Studio 12 to make some music, back to the thought of timing the bpm to the fps. Generally making it so it can go in whichever direction one may want (Android app or YouTube video). But the ears are huge! Well, no, I'm not saying... (Oh yeah, she can hear now...) As far as the app, I'm thinking just Music, Dance, Effects, Light show. Like the videos MTV used to put out. Nothing more. Well, a little more, but not trying to turn it into a game. Maybe a way to vote on what one likes? But I want a sustainable format, open to improvement, i.e. "this is how to put a package together, stick it on the website, and see if anybody downloads it". And if nobody does, make a better one. But there's nothing to see (or hear) right now. Well, maybe you can hear my WIP song! 1 bar = 4 beats, so at 90 bpm and at 12 fps, 1 beat = 0.67s = 8 frames, right? Or, at 120 bpm 1 bar = 2s, so a beat would occur every 6 frames, right? And everywhere in between, I mean it's perfectly simple. Like I'm trying to figure out how to model a song (how many times do they play the riff, then hit the drums, then cut the riff, then repeat which part), and it is apparently set up at 130 bpm, so... 
"which frame does the light flash on ? ... and then off ?" I think that stuff can be figured out, but when I get back to programming the app, I'm worried about getting it all to play together properly timed without a glitch. But still a work in progress... enjoy the ears for now. Vera-3.mdl Song1-B.mp3 -

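The bpm-to-fps arithmetic in the post above can be sketched in a few lines of Java. This is only an illustration: the class name `BeatFrames` and its methods are made up here, and rounding to the nearest frame is an assumption about how one might place a light flash. At 90 and 120 bpm the beat lands on a whole frame at 12 fps, but at 130 bpm it does not, so the sketch computes each beat's frame from the beat number instead of adding a fixed step.

```java
public final class BeatFrames {
    private BeatFrames() {}

    /** Frames per beat at a given tempo and frame rate; may be fractional. */
    public static double framesPerBeat(double bpm, double fps) {
        return fps * 60.0 / bpm;
    }

    /**
     * Frame on which beat number `beat` (0-based) falls, rounded to the
     * nearest whole frame. Computing from the beat index keeps rounding
     * error from piling up over a long song.
     */
    public static long frameForBeat(int beat, double bpm, double fps) {
        return Math.round(beat * framesPerBeat(bpm, fps));
    }

    public static void main(String[] args) {
        double[] tempos = {90, 120, 130};
        for (double bpm : tempos) {
            System.out.printf("%.0f bpm at 12 fps: %.3f frames per beat%n",
                    bpm, framesPerBeat(bpm, 12));
        }
        // Where the first few beats of a 130 bpm song land at 12 fps.
        for (int beat = 0; beat < 8; beat++) {
            System.out.println("beat " + beat + " -> frame "
                    + frameForBeat(beat, 130, 12));
        }
    }
}
```

With a fractional frames-per-beat value, the gap between flashes alternates between 5 and 6 frames, which is probably close enough to the ear at 12 fps.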
I would pattern the orbitron after the atomic model with six (6) sensors of any sort attached to the "electrons". A magnetic charge at the nucleus of course. Also at the nucleus would be a transmitter. Locate the specific nucleus from a "land" station, download the data from the sensors. Now, electrons "supposedly" spin as well as revolve around the nucleus. Now that's some fun math and likely very naturally dictated. My guess is a Helium atom's electron doesn't spin, why, well because the moon doesn't. So starting with a Helium model (two electrons)... you have 12 sensors attached to 2 "electrons" taking pictures or sensing data. Then you have the central station interpolating the position of the "atom" and thus the actual direction of each sensor. While the electrons spin and take "photos", the actual bearing and point of each image (shot, picture, snap, whatever) could be interpolated. The math is head-spinning but at its root just basic sine wave/rotations/vectors. But it would give a 3D "blow-up" of a remote location. Now if that could be used to sense a sudden change in temperature and release a suppression, it could be useful in certain situations. Anyway, I am thankful for the imaginary virtual exercise to visualize something. Do you see it? It's not in metric is it?

-

I saw the movie Predator if that helps. Combining a heat sensor with OpenGL would sum it up. But then why stop at heat when you can click your smart watch and switch to infrared? Kthdthdthdth! And let me test it out so I can get that pesky soldier boy. Shwoowm! Friggin' booby trap he got me with. Finding a path to tinker with all OpenGL can do is the hard part. They even have code for detecting if your finger is hovering over the screen and not yet touching it. Just don't do the brainwave sensor cause then you have to listen to everyone complain. Otherwise, your imagination seems to be reaching out a bit too far. Have you seen the laser measuring tools? I mean, how does that work? "It bounces a laser beam and measures the time it takes to return" "Yeah! at the speed of light, and within a fraction of a millimeter?!" But it's just a little box. Can you take it apart and figure it out? I doubt it. Why does a laser need to be a beam? They have those cameras that take a virtual 3D image and realtors really picked up on it. I think it would take a lot of duct tape, etc. to build the prototype...somehow utilize a mini orbitron...At least there's wifi so it's not a mess of tangled cables. But the best scenario is getting "bought out" by the gov't, never to be heard from again. After an episode of The Running Man. Glad I'm just trying to figure out how to make music right now.
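
The "bounce a beam and time the return" idea quoted above comes down to one formula: distance = speed of light × round-trip time ÷ 2. The Java sketch below only works through that arithmetic; the class name `TimeOfFlight` and its methods are invented for illustration, and it makes no claim about how any particular measuring tool is actually built.

```java
public final class TimeOfFlight {
    private TimeOfFlight() {}

    /** Speed of light in vacuum, in metres per second (exact by definition). */
    public static final double C = 299_792_458.0;

    /** Distance to the target for a measured round-trip time. */
    public static double distanceMeters(double roundTripSeconds) {
        return C * roundTripSeconds / 2.0;
    }

    /** Round-trip time the light needs for a target at the given distance. */
    public static double roundTripSeconds(double distanceMeters) {
        return 2.0 * distanceMeters / C;
    }

    public static void main(String[] args) {
        // A wall 10 m away: the light is back in a few tens of nanoseconds.
        System.out.printf("10 m round trip: %.3e s%n", roundTripSeconds(10.0));
        // Telling 10.000 m from 10.001 m means resolving this time difference.
        System.out.printf("1 mm of distance: %.3e s%n", roundTripSeconds(0.001));
    }
}
```

One millimetre of distance corresponds to roughly 6.7 picoseconds of round-trip time, which is why "within a fraction of a millimeter" sounds so unlikely for a little box.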

-

I noticed the models didn't move in that video, but obviously were 3D meshes with effects applied with a 'not so sure how done' background. Not that it didn't look better than A:M's Shaded View when modeling, but it seemed focused on creating a background with realistic lighting to match how the character (or gun) reflects light, with several other factors involved (understood) at the same time. Seems to me, an object (i.e. model, character) is being rendered on a "layer" on top of the background with the same lighting effects applied, like green screen with lights in one location matching the other. The software looks very similar to 3D Studio Max as well, which I believe is Autodesk, which would make sense as far as using layers (AutoCAD uses layers extensively to draw things on top of others for viewing clarity). 3D Studio Max was big on polygons and that's an issue when exporting objects from A:M. I think AMTex worked well for DirectX files, but there is the issue of how to export 5-point patches (and a spline tied into another without a control point...sorry my vocabulary has been in the attic for awhile) without gaps showing up. Your software apparently uses a file to represent a 3D object and render it. What is the file format? Is it OpenGL, which apparently doesn't have a format similar to the X file and instead uses a class (a not-so-simple Java file in Android) for the object? I'm not familiar with Mocap, but pre-rendered keyed frames would already have lighting applied, no? A real time engine that incorporates pre-rendered work as well as 3D models is my goal, but since things changed to Android (or iOS), the next best one would be written in Java with OpenGL ES, imho. But since we're on the A:M Forum, how does an A:M user put their models into such a program for benefit? My interpretation is that their best bet is to render a character's animations with a flat (black) background, and the surroundings would be filled in by others? 
Character animation, again in my humble opinion, is the hardest part of it all. It's the "content" that is so hard to find in abundance. Create a character, sure, make it dance and sing and appeal to an audience, hello? Bone rotations, Muscle motion, keyframes, timed to a soundtrack that exists in one's imagination. Although, music that is "good enough" is fairly simple, until you need to time it to the animation it's created for. I guess I'm just spinning my own wheels here, but I don't see what I want to animate until I'm hearing a good song. MTV was so influential before it became [crap]. Even though there were bad music videos, the concept adapts well to amateur animators, as in a conceivable time frame to put forth something. If there was help to throw in a background, and just "ship it out", oh and throw in the music, it would be a better approach, I think, than trying to create that masterpiece that never gets done. Sort of put what's existing to exercise. Just throw that crap you got out there... but... if it can be "fixed up" with a fantastic background? Until the best "real time engine" is at our disposal (one based on frame rate rather than max frames per second would be my approach), let's start sticking it on an app. Even if it's crap. Just to get started. You would be good at promoting such a thing, yes? Done with "Real Time Technology" by [your company name here]! Regardless. I need to make base models (without restraints) that can be adapted to share the same actions, but look different. And they need to be pretty, and worst case scenario, ready for the stripper show app. Or worse. That whole "does art reflect life" concept.

-

Is motion capture at all related to this? I remember motion capture involved taping a bunch of "points" to yourself and animating in "real time". Then Kinect came out and basically said that can be done wirelessly. I don't see anything from that video that shows anything more than a pre-rendered animation. I can see what detbear is saying. Right now, you can play an action in shaded mode and tinker with the properties, bone movement, or whatever in real time, but it's usually a mess, and the need to go back and make adjustments is still there. When you say "Real Time", it implies to me that you want to have an animated character that you can display in a format over the internet and communicate in some fashion. Like Twitter with visual, or Skype with a mask. I suppose if you're in the movie business or something similar, you would probably laugh at my naïve take on this topic, but it reminds me of Civil3D being the most biggest [bad grammar on purpose] thing to hit the Civil Engineering industry, when it's just BS. It seems there would be too many things to have to adjust in real time to make it make sense. Are you thinking of making a 1.5 hour movie in 1.5 hours? That's feasible if the plot is to take a day in the life of John Doe and make a film of it, I suppose. I think you're going to reply with an explanation that it's an editor. But I can dance better in real time by a long shot than I can actually animate it. So I'm pretty interested in anything you got.

-

I think she would be better off using A:M to enhance her interest in another career path. Like entomology, there is a big shortage of skeletal layouts for every insect known to mankind. Anything but thinking a college is going to teach her any more than can be learned at will with free tutorials, advice, etc. Game programming, etc. has taken a turn towards mobile devices or Xbox/PlayStation. You do not need to go to a college or university to learn how to develop for these "systems". If you do a little research, you'll find all the information you need on the world wide web. If she's going to pursue a college degree, use it in a way that gives her a career path that is in demand in the "real world". It will never take away from her interest in 'computer painting' (I just coined that...Boom!), but she could find herself very upset with life in the future trying to pursue a phantom industry, possibly getting lucky to land a job with a sign company utilizing Adobe Suite. I think my comments reflect what others were saying. It's also very hard to tell someone the reality behind their dream. I'm 43 and I still get excited about things that I think are going to make me [a way to be financially independent], but they are usually a dead-end from the start. And nobody can tell me I'm wrong. The concept of just finding a career in the game industry is worse in my opinion, because I don't believe it is actually there. Again, the tools to pursue her goals are basically free or available at low cost. A career would be better directed toward things you find in your everyday life (doctors, lawyers, accountants, engineers, etc.) and... wait... (cashiers, stockers, mechanics{should be in the upper category, sorry}, nurses...), and such. Best wishes to your daughter's future. I've noticed in my experience that having some artistic 'crap' and programming 'crap' work very well in interviews for jobs that are unrelated to the "industry". 
Breaks the ice, and makes you look like someone that might be more interesting to work with. But it will end there... at the interview. Find that job, and pursue your artistic goal on your own time. If she likes football, she could model a football helmet, then use a variety of decals, then try to sell it (without the choke hold of working for a company, in which case the idea, design, and material would belong to them). etc.

-

You say you are looking to "rig" this model. There is definitely a basic rigging layout used for most models, etc. But it would not be out of the realm to come up with a rigging system that is a bit different, I would think. So, perhaps due to muscle motion problems and such, your particular model may work better with a new layout. But if you're using the same old bone setup...I'd like to see how it's done. I did my models and stuff with no constraints. When I tried to tie into existing models and stuff, it was always this target and that constraint that made it messy. I would think the model you have there would be more vocal and only need to animate when he frequently coughs.

-

So a digital version of the ol' Operation "board" game...I get it. Why not a Hungry Hungry Hippos app? But then...it would work better with a big square pad...with holographic projection (glasses not included:). I like the "Top Secret" approach. Don't tell anyone anything! Seriously! Let them hack it. Then you're dealing with the real McCoy. Smart. I've got a JavaScript learning book, but not sure if I should waste time with it at this point...It's like 8 years old. Oh! Battleship app! I'm sticking with the simple (ha!, that's still just so funny!...simple) app to play animations. Sort of like something that folks could just veg out and watch...as they do with TV. So the content can't be crappy, really...considering the competition. Still, the ultimate is riding in the Millennium Falcon shooting TIE fighters. I just want in on the sound effects.

-

So let's see the model already. Really, I'm eager to see what you have come up with. Not just a big executive decision to use Unity.

-

Well then, we'll start learning JavaScript. Or as it's called in Unity...UnityScript. ...Sucks! Not gonna do it!

-

Forgot to mention how warm and fuzzy I felt when you said you know me

-

This comes out of the SP Renderer...just to show how easy (ha!) it is to adjust numbers in order to change the dimensions and quantity of sprite sheets...the Renderer is basically the engine for an app. Everything else is basically long nights for disrespected programmers or something. The project doesn't pop up like it used to and that's where I became fearful that it could just disappear by means of hackers, etc. Hence, the desire to share...bla bla bla...I'm not against making money here...

```java
package com.starplayer;

import javax.microedition.khronos.egl.EGLConfig;
import javax.microedition.khronos.opengles.GL10;

import android.opengl.GLSurfaceView.Renderer;
import android.os.ConditionVariable;

public class SPGameRenderer implements Renderer{
    private SPButtons buttons0 = new SPButtons();
    private SPProps props0 = new SPProps();
    //private SPProps props1 = new SPProps();
    //private SPProps props2 = new SPProps();
    //private SPProps props3 = new SPProps();
    //private SPProps props4 = new SPProps();
    private SPDancer dancer0 = new SPDancer();
    private SPDancer dancer1 = new SPDancer();
    private SPDancer dancer2 = new SPDancer();
    //private SPDancer dancer3 = new SPDancer();
    //private SPDancer dancer4 = new SPDancer();

    public static int ButtonFrame = 0;
    public static int PropSheet = 0;
    public static int PropLine = 0;
    public int PropFrame = 0;
    public static int DancerSheet = 0;
    public int DancerLine = 0;
    public int DancerFrame = 0;

    private long time = 0;
    private long time2 = 0;

    private void Play (GL10 gl){
        //************************************************************** Props
        gl.glMatrixMode(GL10.GL_MODELVIEW);
        gl.glLoadIdentity();
        gl.glPushMatrix();
        gl.glScalef(1.0f, 1.0f, 1.0f);
        gl.glTranslatef(0.0f, 0.0f, 0f);

        gl.glMatrixMode(GL10.GL_TEXTURE);
        gl.glLoadIdentity();
        //flip image
        gl.glRotatef(180.0f, 1.0f, 0.0f, 0.0f);
        gl.glTranslatef(0.0f, 1.0f, 0.0f);
        //Props 12x12 sprite sheet 5 sheets
        //12fps
        gl.glTranslatef(PropFrame/12.0f, PropLine/12.0f, 0.0f);
        switch (PropSheet){
        case 0:
            props0.draw(gl);
            break;
        //case 1:
            ///props1.draw(gl);
            //break;
        //case 2:
            //props2.draw(gl);
            //break;
        //case 3:
            //props3.draw(gl);
            //break;
        //case 4:
            //props4.draw(gl);
            //break;
        }
        gl.glPopMatrix();
        gl.glLoadIdentity();

        //*********************************************************** Dancer
        gl.glMatrixMode(GL10.GL_MODELVIEW);
        gl.glLoadIdentity();
        gl.glPushMatrix();
        gl.glScalef(1.0f, 1.0f, 1.0f);
        gl.glTranslatef(0.0f, 0.0f, 0f);

        gl.glMatrixMode(GL10.GL_TEXTURE);
        gl.glLoadIdentity();
        gl.glTranslatef(0.0f, 0.0f, 0f);
        //flip image
        gl.glRotatef(180.0f, 1.0f, 0.0f, 0.0f);
        gl.glTranslatef(0.0f, 1.0f, 0.0f);
        //Dancer 12x12 sprite sheet 5 sheets
        gl.glTranslatef(DancerFrame/12.0f, DancerLine/12.0f, 0.0f);
        switch (DancerSheet){
        case 0:
            dancer0.draw(gl);
            break;
        case 1:
            dancer1.draw(gl);
            break;
        case 2:
            dancer2.draw(gl);
            break;
        case 3:
            //dancer3.draw(gl);
            break;
        case 4:
            //dancer4.draw(gl);
            break;
        }
        gl.glPopMatrix();
        gl.glLoadIdentity();

        //***************************************************************** Buttons
        gl.glMatrixMode(GL10.GL_MODELVIEW);
        gl.glLoadIdentity();
        gl.glPushMatrix();
        gl.glScalef(1.0f, 1.0f, 1.0f);
        gl.glTranslatef(0.0f, 0.0f, 0.0f);

        gl.glMatrixMode(GL10.GL_TEXTURE);
        gl.glLoadIdentity();
        //flip image
        gl.glRotatef(180.0f, 1.0f, 0.0f, 0.0f);
        gl.glTranslatef(0.0f, 1.0f, 0.0f);
        //gl.glTranslatef(0.0f, 0.0f, 0.1f);// Adjust z-value for draw order
        //Background 12x12 sprite sheet 5 sheets
        gl.glTranslatef(ButtonFrame/12.0f, 0.0f, 0.0f);
        buttons0.draw(gl);

        //reset matrices prior to next render
        gl.glPopMatrix();
        gl.glLoadIdentity();

        //************************************************************************************************
        // Update 12fps Frame Locations 5 sheets
        if ((System.currentTimeMillis()- time) > (1000/12)){
            //PropFrame+=1;
            DancerFrame+=1;
            //if (PropFrame>=12){PropFrame=0;PropLine+=1;}
            //if (PropLine>=12){PropLine=0;PropSheet+=1;}
            //if (PropSheet>=5){PropSheet=0;} //change value (1) to # of sheets
            if (DancerFrame>=12){DancerFrame=0;DancerLine+=1;}
            if (DancerLine>=12){DancerLine=0;} //DancerSheet+=1;} //dancer sheet adjusted with L button
            //if (DancerSheet>=1){DancerSheet=0;}
            time = System.currentTimeMillis();
        }

        // Update 4fps Props
        if ((System.currentTimeMillis()-time2) > (1000/4)){
            PropFrame+=1;
            if (PropFrame>=12){PropFrame=0;}
            time2 = System.currentTimeMillis();
        }
        //**************************************************//
    }

    @Override
    public void onDrawFrame(GL10 gl) {
        gl.glClear(GL10.GL_COLOR_BUFFER_BIT | GL10.GL_DEPTH_BUFFER_BIT);
        Play(gl);
        gl.glEnable(GL10.GL_BLEND);
        //gl.glColor4f(1, 1, 1, 1);
        //gl.glBlendFunc(GL10.GL_ONE, GL10.GL_ONE); /* **** */
        gl.glBlendFunc(GL10.GL_SRC_ALPHA,GL10.GL_ONE_MINUS_SRC_ALPHA);
        //gl.glBlendFunc(GL10.GL_SRC_COLOR, GL10.GL_SRC_COLOR);
    }

    @Override
    public void onSurfaceChanged(GL10 gl, int width, int height){
        gl.glViewport(0, 0, width, height);
        gl.glMatrixMode(GL10.GL_PROJECTION);
        gl.glLoadIdentity();
        gl.glOrthof(0f, 1f, 0f, 1f, -1f, 1f);
    }

    @Override
    public void onSurfaceCreated(GL10 gl, EGLConfig config){
        gl.glEnable(GL10.GL_TEXTURE_2D);
        gl.glClearColor(0, 0, 0, 0);
        gl.glClearDepthf(1.0f);
        gl.glEnable(GL10.GL_DEPTH_TEST);
        //gl.glDepthFunc(GL10.GL_LEQUAL);
        gl.glDepthFunc(GL10.GL_ALWAYS);
        gl.glEnable(GL10.GL_BLEND);
        gl.glColor4f(1, 1, 1, 1);
        //gl.glBlendFunc(GL10.GL_ONE, GL10.GL_ONE); /* **** */
        gl.glBlendFunc(GL10.GL_SRC_ALPHA,GL10.GL_ONE_MINUS_SRC_ALPHA);
        //gl.glBlendFunc(GL10.GL_SRC_COLOR, GL10.GL_SRC_COLOR);

        props0.loadTexture(gl, SPEngine.PROPS_0, SPEngine.context);
        //props1.loadTexture(gl, SPEngine.PROPS_1, SPEngine.context);
        //props2.loadTexture(gl, SPEngine.PROPS_2, SPEngine.context);
        //props3.loadTexture(gl, SPEngine.PROPS_3, SPEngine.context);
        //props4.loadTexture(gl, SPEngine.PROPS_4, SPEngine.context);
        dancer0.loadTexture(gl, SPEngine.DANCER_0, SPEngine.context);
        dancer1.loadTexture(gl, SPEngine.DANCER_1, SPEngine.context);
        dancer2.loadTexture(gl, SPEngine.DANCER_2, SPEngine.context);
        //dancer3.loadTexture(gl, SPEngine.DANCER_3, SPEngine.context);
        //dancer4.loadTexture(gl, SPEngine.DANCER_4, SPEngine.context);
        buttons0.loadTexture(gl, SPEngine.BUTTONS_0, SPEngine.context);

        gl.glMatrixMode(GL10.GL_MODELVIEW);
        gl.glLoadIdentity();
        gl.glPushMatrix();
        gl.glScalef(1.0f, 1.0f, 1.0f);
        gl.glTranslatef(0.0f, 0.0f, 0.0f);

        gl.glMatrixMode(GL10.GL_TEXTURE);
        gl.glLoadIdentity();
        //flip image
        gl.glRotatef(180.0f, 1.0f, 0.0f, 0.0f);
        gl.glTranslatef(0.0f, 1.0f, 0.0f);
        //gl.glTranslatef(0.0f, 0.0f, 0.1f);// Adjust z-value for draw order
        //Buttons 12x12 sprite sheet 5 sheets
        gl.glTranslatef(ButtonFrame/12.0f, 0.0f, 0.0f);
    }

    public void onViewPause(ConditionVariable syncObj){
        props0 = null;
        //props1 = null;
        //props2 = null;
        //props3 = null;
        //props4 = null;
        dancer0 = null;
        dancer1 = null;
        dancer2 = null;
        buttons0 = null;
        syncObj.open();
    }
}
```

I can edit this with better direction, but someone should download Eclipse with Android Developer Tools and get things up and running with a basic "how-to" first...IMHO. What is Love is on the radio... got to go!
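
The frame bookkeeping in the renderer above (12x12 cells per sheet, stepping at 12 fps) could also be derived straight from elapsed time. The sketch below is plain Java with no Android dependencies; the class `SpriteClock` and its methods are hypothetical names, not part of the StarPlayer code, and rolling over to the next sheet automatically is an assumption (the original switches dancer sheets with a button). One possible reason to try it: in Java `1000/12` is integer division and yields 83, and the timer above restarts from "now" after every step, so the posted loop may run slightly under 12 fps and slip against a soundtrack.

```java
public final class SpriteClock {
    private SpriteClock() {}

    public static final int COLS = 12; // frames per line, as in the renderer above
    public static final int ROWS = 12; // lines per sheet, as in the renderer above

    /** Absolute frame number reached after elapsedMillis at the given rate. */
    public static long frameAt(long elapsedMillis, int fps) {
        return elapsedMillis * fps / 1000L;
    }

    /**
     * Splits a frame number into {sheet, line, column}, wrapping back to the
     * first sheet after sheetCount sheets have played.
     */
    public static int[] locate(long frame, int sheetCount) {
        long perSheet = (long) COLS * ROWS;
        long wrapped = frame % (perSheet * sheetCount);
        int sheet = (int) (wrapped / perSheet);
        int inSheet = (int) (wrapped % perSheet);
        return new int[] { sheet, inSheet / COLS, inSheet % COLS };
    }

    /** Texture offsets {u, v} matching the glTranslatef(frame/12, line/12, 0) calls. */
    public static float[] textureOffset(int line, int column) {
        return new float[] { column / (float) COLS, line / (float) ROWS };
    }

    public static void main(String[] args) {
        long start = System.currentTimeMillis();
        long frame = frameAt(System.currentTimeMillis() - start, 12);
        int[] cell = locate(frame, 3); // three dancer sheets are loaded above
        float[] uv = textureOffset(cell[1], cell[2]);
        System.out.println("sheet " + cell[0] + ", line " + cell[1]
                + ", column " + cell[2] + ", u=" + uv[0] + ", v=" + uv[1]);
    }
}
```

In a renderer, `start` would be captured when playback (or the song) begins, and `locate` would be called once per `onDrawFrame` to set the sheet, line, and frame values.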

-

Don't forget to say it's on Google Play as monoboom starplayer if you do a quick search for the app in the media category. And of course the content was done in about 4 hours whereas the program took about 2 years. I suppose there has to be a business plan, etc. behind something of this sort, which sometimes befuddles me as it's done and ready to test out (play with), and develop further. But the business plan is basically MTV on the phone, with animation. Old songs, new songs, real video layered with computer graphics. It's the basic app to get off the ground and all apps share the basic Android programming behind it (well, Android apps as far as I know, but iOS is probably similar). I've also noticed that some development tools can be just as hard to use once one gets into scripting in order to make a unique app, yet they will charge 25% if the app gains popularity. Either way, I hope we can play with it soon. It might be fun to convert to web pages as well.

-

Games are for kids (well, adults too), but intended to open a door. I think the "game" is getting downloads, i.e. attention. Unity takes 25% once you break through that barrier. I don't understand why any investment type would contact you for "game" development unless they were more interested in the animation... ...and Point 5. You're about to lose all of the development I've done, while you try to find an easy way to make games via some development tool (written in C++). I'm losing the ability to restore my project in Eclipse, i.e. it's about to disappear and hackers have all of the information and work I put into it. I'm being pushed out. That's how it goes.